TL;DR:

The Anthropic API provides access to Claude, a state-of-the-art AI model suite. Claude Opus 4.5 (released Nov 2025) costs about 67% less than its predecessor while offering stronger reasoning for coding, research, and agents. Learn setup, pricing, cost optimization, and multi-model integration strategies.

Introduction

The AI landscape shifted in November 2025 when Anthropic released Claude Opus 4.5, completing a Claude 4.5 lineup that also includes Sonnet 4.5 and Haiku 4.5. For developers and organizations, the update delivered a rare combination: more capable models at substantially lower cost. The flagship Claude Opus 4.5 now costs $5/$25 per million input/output tokens, a 67% reduction from its $15/$75 predecessor, while posting strong results in coding, reasoning, and autonomous agent tasks.

If you've held off integrating Claude into your applications due to cost concerns, the economics have fundamentally changed. If you're already using the API, understanding the new pricing tiers and advanced features like batch processing and prompt caching can reduce your operational costs by 50–90%. For teams managing multiple AI model integrations across OpenAI, Google, and Anthropic, unified API platforms streamline development while maintaining full access to each provider's unique capabilities.

This comprehensive guide walks you through everything needed to evaluate, set up, and optimize the Anthropic API for your specific use case. Whether you're building a chatbot, analyzing documents, automating code tasks, powering AI agents, or integrating Claude alongside other leading AI models, you'll find actionable guidance backed by the latest official data and practical integration strategies.

Which Claude Model Is Right for You?

Anthropic offers three model tiers, each optimized for different workloads. The Claude 4.5 series, completed in November 2025 with the release of Opus 4.5, is the latest generation and delivers significant improvements in reasoning, coding capability, and cost efficiency over earlier versions.

The Claude 4.5 Family (Current Generation)

Claude Opus 4.5: The Most Intelligent Model

Claude Opus 4.5 is Anthropic's flagship model, engineered for complex reasoning, advanced coding, and multi-step autonomous tasks. It excels at code generation, bug fixing, architectural design, and sustained reasoning over long conversations. Opus 4.5 scores 80.9% on SWE-bench Verified, placing it among the most capable coding models available at release. It rivals or exceeds competing frontier models on reasoning-intensive workloads.

Use Opus 4.5 when accuracy and depth matter more than speed: complex data analysis, multi-system debugging, strategic planning, building autonomous AI agents, or handling edge cases where lesser models might fail. Despite its premium capability, its pricing ($5/$25 per million tokens) makes it competitive with mid-tier models from 2024, representing exceptional value for enterprise applications.

Claude Sonnet 4.5: The Balanced Choice

Claude Sonnet 4.5 strikes a balance between intelligence and speed, making it the go-to model for most production applications. It handles knowledge retrieval, summarization, content creation, customer support, and complex document analysis efficiently without sacrificing quality. A major advantage: Sonnet 4.5 supports a context window of up to 1 million tokens, with base pricing applying to requests of up to 200K tokens. That capacity enables processing entire codebases, lengthy legal documents, or comprehensive research archives in a single request.

For long-context requests exceeding 200K tokens, pricing increases to $6/$22.50 per million tokens—still exceptional value for document-heavy workflows. Sonnet 4.5 processes faster than Opus, making it ideal for real-time applications where response latency is critical. Many production teams standardize on Sonnet 4.5 for its reliability, speed, and cost balance.

Claude Haiku 4.5: The Speed Champion

Claude Haiku 4.5 is the fastest and most compact model in the Claude family, optimized for near-instant responsiveness. At $1/$5 per million tokens, it's the most cost-effective option for high-volume workloads. Haiku excels at classification, simple text analysis, quick summarization, content tagging, and rapid-response chatbot interactions. Its speed makes it ideal for interactive applications where milliseconds matter.

Use Haiku for tasks where speed and cost are paramount: live customer support, real-time content moderation, high-frequency API polling, or processing thousands of lightweight queries. While it's less capable than Sonnet or Opus for complex reasoning, it's perfectly suited to its design purpose and can handle surprisingly sophisticated tasks.

Legacy Models (Available but Not Recommended)

Claude Opus 4.1 and earlier versions remain accessible via the API at their original pricing ($15/$75 for Opus 4.1). These models carry a significant "legacy premium"—a single task that costs $0.10 on Opus 4.5 would cost $0.30 on Opus 4.1. For new integrations, the Claude 4.5 family is the logical default. Migration from legacy models to Claude 4.5 typically yields better performance at lower cost, making the upgrade path straightforward.

| Model | Input Cost (per MTok) | Output Cost (per MTok) | Best For | Context Window | Speed |
| --- | --- | --- | --- | --- | --- |
| Opus 4.5 | $5.00 | $25.00 | Complex reasoning, coding, agents | 200K | Slower |
| Sonnet 4.5 | $3.00 | $15.00 | Balanced, production workloads | 1M | Fast |
| Sonnet 4.5 (>200K tokens) | $6.00 | $22.50 | Long-context requests | 1M | Fast |
| Haiku 4.5 | $1.00 | $5.00 | Fast, cost-sensitive workloads | 200K | Fastest |
| Opus 4.1 (legacy) | $15.00 | $75.00 | Legacy systems only | 200K | Slower |

Unlocking Claude's Full Potential and Advanced Features

Beyond core API calls, Anthropic provides powerful add-on features that expand Claude's capabilities and enable sophisticated use cases.

Files API

Upload documents, images, PDFs, or code files once and reference them across multiple API calls. This reduces token consumption for repeated analyses and enables complex workflows like "analyze 10 PDFs for compliance issues" or "extract data from 50 invoices." Files are stored securely and referenced by ID, improving efficiency compared to embedding file contents in every request.

Vision Capabilities

Claude can analyze images and PDFs natively. Pass image data in your requests to enable tasks like screenshot analysis, document extraction, diagram interpretation, visual quality assessment, or accessibility description generation. This eliminates the need for separate OCR or image-processing services.
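As a rough illustration of passing image data in a request, the sketch below builds a `messages` list with one base64-encoded image block followed by a text question. The helper name `build_image_message` is hypothetical; the block layout follows the Messages API content-block format as I understand it, so check it against the official reference before relying on it.

```python
import base64


def build_image_message(image_bytes: bytes, media_type: str, question: str) -> list[dict]:
    """Build a `messages` list containing one image block and one text block."""
    encoded = base64.standard_b64encode(image_bytes).decode("utf-8")
    return [
        {
            "role": "user",
            "content": [
                {
                    "type": "image",
                    "source": {"type": "base64", "media_type": media_type, "data": encoded},
                },
                {"type": "text", "text": question},
            ],
        }
    ]
```

The returned list can be passed as the `messages` argument of `client.messages.create(...)`, for example with the bytes of a PNG screenshot and `media_type="image/png"`.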

Tool Use and Computer Use

Claude can call external tools (APIs, databases, calculators) or interact with web browsers autonomously. This enables agent-like behaviors where Claude decides when and how to use external resources. For example, Claude can check an API for current data, process it, and return results without explicit step-by-step instructions.
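To make the tool-use loop more concrete, here is a minimal sketch of the three pieces an application typically supplies: a tool definition, a local function that executes the tool, and the message that returns the result to Claude. The `add_numbers` tool and both helper functions are hypothetical and exist only for illustration.

```python
# Tool definition in the shape the Messages API expects: name, description, input_schema.
CALCULATOR_TOOL = {
    "name": "add_numbers",  # hypothetical tool name
    "description": "Add two numbers and return the sum.",
    "input_schema": {
        "type": "object",
        "properties": {"a": {"type": "number"}, "b": {"type": "number"}},
        "required": ["a", "b"],
    },
}


def run_tool(name: str, tool_input: dict) -> str:
    """Execute a requested tool locally and return its result as text."""
    if name == "add_numbers":
        return str(tool_input["a"] + tool_input["b"])
    raise ValueError(f"Unknown tool: {name}")


def tool_result_message(tool_use_id: str, result: str) -> dict:
    """Wrap a tool's output in the user message that is sent back to Claude."""
    return {
        "role": "user",
        "content": [{"type": "tool_result", "tool_use_id": tool_use_id, "content": result}],
    }
```

In a real loop you would pass `tools=[CALCULATOR_TOOL]` to `client.messages.create(...)`. When the response has `stop_reason == "tool_use"`, find the content block whose `type` is `"tool_use"`, call `run_tool(block.name, block.input)`, and send `tool_result_message(block.id, result)` after the assistant turn.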

Skills and Memory

For autonomous agents, Claude can store and retrieve contextual information across sessions, enabling multi-step, persistent workflows without re-explaining context. This is essential for building AI systems that improve over time and maintain continuity across conversations.

Anthropic API Pricing: Breaking Down Costs and Optimization Strategies

The Anthropic API uses a token-based pricing model, where you pay separately for input tokens (your prompt) and output tokens (Claude's response). Understanding this structure is essential for budgeting, financial planning, and cost management at scale.

Base Pricing: Simple and Transparent

Pricing scales with model capability. A token is approximately 0.75 words in English, so a 1,000-word document consumes roughly 1,333 tokens. Code and technical documentation tokenize differently due to punctuation and special characters. The cost formula is straightforward:

Total Cost = (Input Tokens ÷ 1,000,000 × Input Rate) + (Output Tokens ÷ 1,000,000 × Output Rate)

For example, a request with 10,000 input tokens and 2,000 output tokens on Claude Sonnet 4.5 costs:

- Input: (10,000 ÷ 1,000,000) × $3 = $0.03
- Output: (2,000 ÷ 1,000,000) × $15 = $0.03
- Total: $0.06 per request
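The cost formula translates directly into a small helper. This is a sketch for budgeting only: the function name `request_cost` is made up for this example, and the rates are the Sonnet 4.5 base prices quoted in this guide.

```python
def request_cost(input_tokens: int, output_tokens: int,
                 input_rate: float, output_rate: float) -> float:
    """Return the USD cost of one request; rates are USD per million tokens."""
    input_cost = input_tokens / 1_000_000 * input_rate
    output_cost = output_tokens / 1_000_000 * output_rate
    return input_cost + output_cost


# Sonnet 4.5 base rates quoted in this guide: $3 input, $15 output per MTok.
cost = request_cost(10_000, 2_000, input_rate=3.0, output_rate=15.0)
print(f"${cost:.2f}")  # prints $0.06
```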

At scale, a team processing 1 billion tokens monthly on Sonnet would pay approximately $3,000–$15,000 depending on input/output mix. This transparency makes budgeting predictable, unlike some competitors with hidden surcharges or minimum commitments.

Advanced Cost Optimization: Cut Costs by 50–90%

Three powerful mechanisms let you dramatically reduce token costs without sacrificing capability or output quality:

Batch API (50% Flat Discount)

For non-urgent workloads, the Batch API processes requests asynchronously and charges a flat 50% discount on all token costs. If you're generating reports overnight, analyzing historical data, processing weekly analytics, or running scheduled data extraction jobs, batch processing cuts costs in half with typically 1-hour turnaround times.

Example: 500M input tokens and 500M output tokens on Sonnet 4.5

- Standard pricing: $1,500 (input) + $7,500 (output) = $9,000
- Batch pricing: $4,500 (50% savings)

This feature is invaluable for cost-conscious teams processing predictable, non-real-time workloads.
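For a sense of what submitting a batch looks like, the sketch below converts a list of prompts into batch request entries and hands them to the SDK's batch endpoint. The `job-N` naming scheme and both function names are illustrative assumptions, and `submit_batch` needs the `anthropic` package plus a valid `ANTHROPIC_API_KEY` to run.

```python
def build_batch_requests(prompts: list[str], model: str, max_tokens: int = 1024) -> list[dict]:
    """Turn a list of prompts into Batch API request entries with unique custom_ids."""
    return [
        {
            "custom_id": f"job-{index}",  # hypothetical naming scheme for matching results
            "params": {
                "model": model,
                "max_tokens": max_tokens,
                "messages": [{"role": "user", "content": prompt}],
            },
        }
        for index, prompt in enumerate(prompts)
    ]


def submit_batch(prompts: list[str], model: str = "claude-sonnet-4-5-20250929"):
    """Submit the prompts as one asynchronous batch (requires ANTHROPIC_API_KEY)."""
    from anthropic import Anthropic  # imported lazily so the builder works without the SDK

    client = Anthropic()
    return client.messages.batches.create(requests=build_batch_requests(prompts, model))
```

Results arrive asynchronously, so each `custom_id` is what lets you match an output back to the prompt that produced it.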

Prompt Caching (Up to 90% Savings)

For workflows involving repeated context—such as querying the same PDF multiple times, building systems that reuse system prompts, or analyzing the same codebase with different instructions—prompt caching is transformative. After the first request (which incurs a 25% write penalty), cached tokens cost only 10% of the base input rate.

Example: Processing the same 100-page PDF (about 100K tokens) with 5 different queries on Sonnet 4.5 ($3 per MTok input)

- First request: 100K context tokens at 1.25× rate = $0.375
- Remaining 4 requests: 100K cached tokens at 0.1× rate = $0.03 each ($0.12 total)
- Total for the shared context: $0.495 instead of $1.50 uncached (67% savings); savings approach 90% as the number of cache reads grows

This feature alone can transform the economics of document-heavy applications, RAG systems, and knowledge-base queries.
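The sketch below shows two things under stated assumptions: how a reused block can be marked cacheable with `cache_control`, and how the shared-context cost works out when the 1.25× write and 0.1× read multipliers are applied to Sonnet's $3 per MTok input rate. Both function names are hypothetical, and the calculation covers only the shared context, not each query's own tokens or the output.

```python
def cached_system_prompt(document_text: str) -> list[dict]:
    """Mark a large, reused block as cacheable via `cache_control`."""
    return [
        {
            "type": "text",
            "text": document_text,
            "cache_control": {"type": "ephemeral"},
        }
    ]


def caching_cost(context_tokens: int, requests: int, input_rate: float,
                 write_multiplier: float = 1.25,
                 read_multiplier: float = 0.10) -> tuple[float, float]:
    """Return (cost_with_caching, cost_without_caching) in USD for the shared context."""
    base = context_tokens / 1_000_000 * input_rate
    with_cache = base * write_multiplier + base * read_multiplier * (requests - 1)
    without_cache = base * requests
    return with_cache, without_cache


with_cache, without_cache = caching_cost(100_000, requests=5, input_rate=3.0)
print(f"${with_cache:.3f} vs ${without_cache:.2f}")  # prints $0.495 vs $1.50
```

The list returned by `cached_system_prompt` is intended for the `system` argument of `client.messages.create(...)`. Raising `requests` in `caching_cost` shows the savings climbing toward the 90% ceiling.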

Extended Thinking (Reasoning with Transparency)

Extended thinking allows Claude to "think out loud" before answering, improving output quality for complex problems. While thinking tokens cost extra (charged as output tokens), they often reduce total tokens needed because the model can reason more efficiently and avoid errors requiring re-prompting.

You set a thinking budget for each request, with a minimum of 1,024 tokens. For truly complex tasks, the thinking investment pays for itself through better, more accurate responses. This is especially valuable for code generation, mathematical reasoning, and multi-step problem solving.
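A minimal sketch of enabling extended thinking follows. It only builds the keyword arguments for `client.messages.create`, so it runs without network access. The helper name and default budgets are assumptions chosen for illustration.

```python
MIN_THINKING_BUDGET = 1024  # minimum budget stated in this guide


def thinking_request(prompt: str, budget_tokens: int = 2048, answer_tokens: int = 1024,
                     model: str = "claude-sonnet-4-5-20250929") -> dict:
    """Build keyword arguments for `client.messages.create` with extended thinking on."""
    if budget_tokens < MIN_THINKING_BUDGET:
        raise ValueError(f"budget_tokens must be at least {MIN_THINKING_BUDGET}")
    return {
        "model": model,
        # max_tokens must exceed the thinking budget, so leave room for the answer.
        "max_tokens": budget_tokens + answer_tokens,
        "thinking": {"type": "enabled", "budget_tokens": budget_tokens},
        "messages": [{"role": "user", "content": prompt}],
    }
```

Usage would look like `client.messages.create(**thinking_request("Plan a database migration"))`. With thinking enabled, the response content can include thinking blocks ahead of the answer, so select blocks whose `type` is `"text"` rather than assuming the first block holds the reply.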

When to Use Each Model: A Practical Decision Framework

Choosing the right model directly impacts cost and performance. Here's a simple framework for model selection:

- Use Haiku 4.5 for: Real-time chat, classification, content moderation, summarization, high-volume filtering, sentiment analysis
- Use Sonnet 4.5 for: Production applications, knowledge retrieval, content creation, document analysis, general-purpose reasoning, customer support automation
- Use Opus 4.5 for: Complex multi-system debugging, advanced research, code architecture design, autonomous agents, tasks where accuracy is non-negotiable, novel problem-solving

A cost-conscious strategy: Start with Haiku for the first attempt. If responses are insufficient, upgrade to Sonnet. Reserve Opus for tasks where Sonnet demonstrably falls short or where error rates are unacceptable. This tiered approach optimizes cost without sacrificing quality.
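The tiered strategy can be sketched as a simple escalation loop. Everything here is illustrative: `call_model` and `is_sufficient` are hypothetical callables you would supply, and the Haiku and Opus model IDs are assumed aliases (only the Sonnet ID appears in this guide).

```python
from typing import Callable

# Model IDs are assumptions except the Sonnet ID, which appears in this guide.
MODEL_LADDER = [
    "claude-haiku-4-5",
    "claude-sonnet-4-5-20250929",
    "claude-opus-4-5",
]


def answer_with_escalation(prompt: str,
                           call_model: Callable[[str, str], str],
                           is_sufficient: Callable[[str], bool]) -> tuple[str, str]:
    """Try each model from cheapest to most capable; return (model, answer)."""
    answer = ""
    model = MODEL_LADDER[0]
    for model in MODEL_LADDER:
        answer = call_model(model, prompt)
        if is_sufficient(answer):
            break  # stop at the cheapest model that is good enough
    return model, answer
```

If no model passes the check, the function returns the last (most capable) model's answer. The quality of `is_sufficient` largely determines whether this saves money, since every failed attempt is still billed.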

How to Get Your Anthropic API Key

Getting started with the Anthropic API requires an API key. This process takes less than 10 minutes and is straightforward for any developer.

- Visit the Anthropic Console: Go to https://platform.claude.com/ and create a developer account with a valid email address.
- Verify Your Email: Anthropic sends a verification link; click it to activate your account and unlock API access.
- Navigate to API Keys: In the console sidebar, find "API Keys" under the Settings section.
- Generate a New Key: Click "Create Key" and name it descriptively (e.g., "Development," "Production," or "Research").
- Secure Your Key: The key displays once. Treat it like a password: never share it publicly, commit it to version control, embed it in client-side code, or expose it in logs. If compromised, delete it immediately and generate a replacement.

Important: Store your API key as an environment variable. In your application, retrieve it via process.env.ANTHROPIC_API_KEY (Node.js) or os.getenv("ANTHROPIC_API_KEY") (Python) rather than hardcoding it. This prevents accidental exposure in code repositories.

import os

from anthropic import Anthropic

# Retrieve the API key from the environment
api_key = os.getenv("ANTHROPIC_API_KEY")
client = Anthropic(api_key=api_key)

Making Your First Anthropic API Request

Here's a minimal Python example to verify your setup and start building:

import os

from anthropic import Anthropic

# Initialize client with API key from environment
client = Anthropic(api_key=os.getenv("ANTHROPIC_API_KEY"))

# Make your first API request
message = client.messages.create(
    model="claude-sonnet-4-5-20250929",
    max_tokens=1024,
    messages=[
        {"role": "user", "content": "Explain the Anthropic API in 100 words."}
    ],
)

# Print the response
print(message.content[0].text)

Save this as test_api.py, set your environment variable (export ANTHROPIC_API_KEY=your_key), and run python test_api.py. You should see Claude's response within seconds.

For complete setup instructions, code samples in Python, JavaScript, curl, and other languages, advanced patterns like streaming, and comprehensive API reference documentation, see the official Anthropic API documentation.

Integrating the Anthropic API with Unified AI Platforms like GPT Proto

While direct integration with the Anthropic API is straightforward, many development teams manage multiple AI APIs from OpenAI, Google, Anthropic, and other providers simultaneously. This creates integration complexity: different authentication methods, inconsistent request/response formats, separate rate-limit management, and juggling multiple API keys.

The Unified API Platform Advantage

A unified AI API platform like GPT Proto aggregates multiple leading models—Claude, GPT-4, Gemini, and others—under a single consistent interface. This approach offers significant operational and development advantages:

Simplified Development

Instead of learning Anthropic's request format, OpenAI's format, and Google's format separately, developers write once against a unified API. The same request structure works across all integrated models. Switching from Claude Sonnet to GPT-4o requires changing a single parameter rather than refactoring request code.

# Unified API: same request structure for all models
# (`client` is assumed to be already configured for the unified platform)
response = client.messages.create(
    model="claude-sonnet-4-5-20250929",  # switch to "gpt-4o" without changing code structure
    messages=[{"role": "user", "content": "Explain quantum computing"}],
    max_tokens=1024,
)

Centralized Key Management

Instead of storing API keys for OpenAI, Anthropic, Google Gemini, and others across your environment, you manage a single unified platform key. This reduces the security surface area and simplifies key rotation, rate limiting, and auditing.

Flexible Model Switching

Run A/B tests comparing Claude Sonnet against GPT-4o Mini without rewriting integration code. Route requests based on cost, latency, or availability. If one provider experiences downtime, automatically fall back to another model. This flexibility is considerably harder to achieve when each provider is integrated directly.

Cost Intelligence and Optimization

Unified platforms provide cross-provider cost tracking, showing which models are used, which provide best value for your workloads, and where you can optimize spending. Many platforms offer volume discounts or negotiated rates unavailable to individual developers.

Advanced Routing and Load Balancing

Sophisticated platforms can route requests based on:

- Task complexity (use Haiku for simple tasks, Opus for complex ones)
- Cost budgets (fall back to cheaper models if limits approached)
- Latency requirements (route time-sensitive requests to fastest models)
- Model specialization (route code tasks to specialized code models)
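The routing criteria above could be combined into a rule-based selector along the following lines. This is a sketch, not how any particular platform implements routing: the `Task` fields, the 1 to 5 complexity scale, and the budget threshold are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Task:
    complexity: int          # hypothetical 1 (trivial) to 5 (hard) score
    latency_sensitive: bool  # True when the caller needs a fast reply
    budget_remaining: float  # USD left in the caller's budget


def choose_tier(task: Task, low_budget_threshold: float = 1.0) -> str:
    """Pick a model tier using budget, then latency, then complexity."""
    if task.budget_remaining < low_budget_threshold:
        return "haiku"  # fall back to the cheapest tier near the budget limit
    if task.latency_sensitive:
        # Prefer the fastest tier unless the task is too hard for it.
        return "haiku" if task.complexity <= 3 else "sonnet"
    if task.complexity <= 2:
        return "haiku"
    if task.complexity >= 4:
        return "opus"   # reserve the most capable tier for hard tasks
    return "sonnet"     # balanced default
```

Checking the budget first means cost limits override every other preference, which is one reasonable ordering among several.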

When to Use a Unified Platform vs. Direct Integration

Use direct Anthropic API integration if:

- You exclusively use Claude and have no plans to integrate other models
- You need maximum customization or access to bleeding-edge features before platform support
- You want the lowest latency and full control over API behavior
- Your use case is simple and doesn't require multi-model management

Use a unified platform like GPT Proto if:

- You use multiple AI models (Claude + GPT-4 + Gemini) in the same application
- You want flexibility to switch models without rewriting code
- Cost optimization across models is important
- You need load balancing, failover, or intelligent routing
- Your team prefers unified tooling and API management
- You want simplified key management and usage tracking
- You're evaluating different models and need easy A/B testing

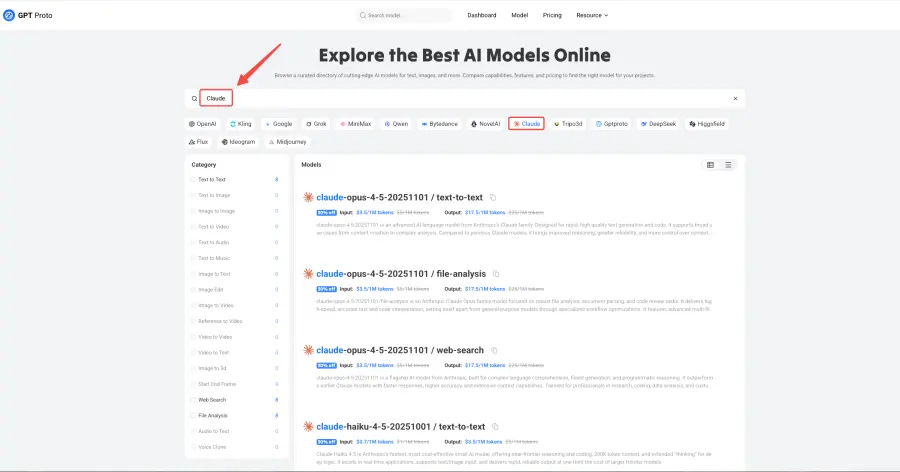

Access Anthropic API with GPT Proto

GPT Proto is a unified AI API aggregation platform supporting Claude 4.5, GPT-5, Gemini 2.5, and 100+ other models through a single consistent interface.

Step-by-Step Guide:

- Create an account at https://gptproto.com/
- Generate a GPT Proto API key in your dashboard
- Set environment variable: export GPTPROTO_API_KEY=your_key
- Use any model through the same request format

import os

from anthropic import Anthropic

# Initialize with GPT Proto endpoint instead of Anthropic's
client = Anthropic(
    api_key=os.getenv("GPTPROTO_API_KEY"),  # key exported in the environment-variable step
    base_url="https://api.gptproto.com/v1",  # GPT Proto's unified endpoint
)

# Use any model available on the platform
response = client.messages.create(
    model="claude-sonnet-4-5-20250929",  # Anthropic
    max_tokens=1024,  # required by the Anthropic SDK
    messages=[{"role": "user", "content": "Hello Claude"}],
)

# Switch to another model with a one-line change
response2 = client.messages.create(
    model="gpt-4o",  # OpenAI
    max_tokens=1024,
    messages=[{"role": "user", "content": "Hello GPT-4"}],
)

Benefits of GPT Proto for Anthropic Users:

- Multi-model access: Claude + GPT-4 + Gemini all through one key
- Competitive pricing: Often lower rates than direct provider access due to volume
- Advanced routing: Automatic model selection based on cost, speed, or capability
- Unified dashboard: Single view of usage, costs, and requests across all models
- Failover support: If Anthropic experiences issues, automatically retry with alternative models
- Developer-friendly: Web interface, API documentation, code samples, and community support

Real-world example: A startup building a customer support AI might use GPT Proto to:

- Route simple questions to Haiku (fast, cheap)
- Escalate complex issues to Sonnet (balanced capability)
- Use GPT-4o for highest-priority customer cases
- A/B test which model produces best support satisfaction
- Track costs per use case and optimize accordingly

FAQs

Is the Anthropic API free?

No, the API operates on a pay-as-you-go model billed per token based on your usage. However, Anthropic offers free credits for new developer accounts, allowing you to experiment at no cost. The web interface at https://claude.ai/ offers limited free usage (separate from API usage), while API access requires a paid account.

Can I use the Anthropic API without an API key?

No, an API key is mandatory for authentication, authorization, and billing purposes for every API call. Your key grants access to your account and enables accurate usage tracking and billing.

How does Claude compare to OpenAI's GPT-4?

Claude Sonnet 4.5 and GPT-4 are highly competitive. Claude excels at reasoning, coding (Opus 4.5 scores 80.9% on SWE-bench Verified), and safety. Claude Sonnet 4.5 costs $3/$15 per million input/output tokens, which is lower than GPT-4's pricing. GPT-4 may have advantages in specific domains or languages. Many teams use both, leveraging each model's strengths, so the right choice depends on your specific use case.

What happens if I exceed my token limit?

Your requests may be throttled or rejected with a 429 error until your monthly limit resets or you upgrade your account tier. The Anthropic Console shows real-time usage, allowing you to monitor consumption and adjust behavior before limits are reached. Enterprise accounts can request custom limits.
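One common way to cope with 429 responses is to retry with exponential backoff. The helper below is a generic sketch: `call_with_backoff` is a hypothetical name, the delays are arbitrary defaults, and the exception type to retry on is passed in by the caller.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_backoff(request: Callable[[], T], retry_on: type[Exception],
                      max_attempts: int = 5, base_delay: float = 1.0,
                      sleep: Callable[[float], None] = time.sleep) -> T:
    """Call `request`, retrying with exponential backoff plus jitter on `retry_on`."""
    for attempt in range(max_attempts):
        try:
            return request()
        except retry_on:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error to the caller
            sleep(base_delay * 2 ** attempt + random.uniform(0, 0.5))
    raise RuntimeError("max_attempts must be at least 1")
```

With the official Python SDK this might be used as `call_with_backoff(lambda: client.messages.create(...), retry_on=anthropic.RateLimitError)`. The SDK also performs a small number of automatic retries on its own, so a wrapper like this mainly helps for longer waits.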

Should I use batch processing or standard API?

Use batch for non-time-sensitive workloads (reports, analysis, overnight processing, scheduled jobs) where 50% cost savings justify 1-hour delays. Use standard API for real-time applications, interactive workflows, or time-sensitive tasks where immediate response is critical. The 50% cost reduction makes batch ideal for high-volume analytics and data processing.

Is GPT Proto compatible with my existing Anthropic code?

Yes. GPT Proto is compatible with the official Anthropic Python SDK and supports the same request format. Change the base_url parameter and the API key when initializing the client; no other code changes are needed, so your existing Anthropic integrations carry over directly.

Can I use Claude offline?

No, the Anthropic API requires an internet connection and API authentication. Claude is a cloud-based service without offline deployment options. For offline AI, consider open-source alternatives like Llama or Mistral.

Conclusion

The Anthropic API has become the most compelling choice for developers and organizations seeking frontier AI capability at sustainable costs. Claude 4.5's combination of intelligence, cost efficiency, and advanced features (batch processing, prompt caching, extended thinking) creates a powerful foundation for production AI systems that scale reliably and economically.

Whether you're prototyping an AI assistant, automating code tasks, powering autonomous agents, or building production systems, the Anthropic API delivers the tools and pricing structure to succeed. Start with a small experiment—generate your API key, make your first request, and explore Claude's capabilities. As you scale, leverage batch processing and prompt caching to optimize costs dramatically. When complexity demands it, Opus 4.5 is there, offering frontier reasoning at prices that now rival mid-tier models from competitors.

For teams managing multiple AI models, unified API platforms such as GPT Proto reduce integration complexity, improve cost optimization, and provide the flexibility to switch models without rewriting code. The future of AI application development is faster, cheaper, smarter, and more flexible with Claude and unified API management.

Your next breakthrough application might be just an API call away. Begin today.