TL;DR:

DeepSeek V4, expected around mid-February 2026, is a coding-focused AI model that is reportedly set to outperform Claude and ChatGPT on programming tasks. It is said to introduce a new mHC architecture and an Engram memory system, to handle more than 1 million tokens of context, and to come with lighter versions that run on consumer hardware, continuing DeepSeek's tradition of cost-efficient, open-source innovation.

Introduction

The AI world is buzzing with anticipation as Chinese AI startup DeepSeek prepares to launch its next flagship model, DeepSeek V4. Expected around mid-February 2026, coinciding with the Lunar New Year, the model is positioned as a significant step forward in artificial intelligence development. Unlike general-purpose AI assistants, DeepSeek V4 is reportedly designed specifically to excel at programming and code generation. According to reports citing internal testing, it is expected to surpass the coding capabilities of industry leaders such as OpenAI's ChatGPT and Anthropic's Claude. Just as notable, DeepSeek is expected to keep the model open-source and accessible, continuing its tradition of making advanced AI available to the broader developer community.

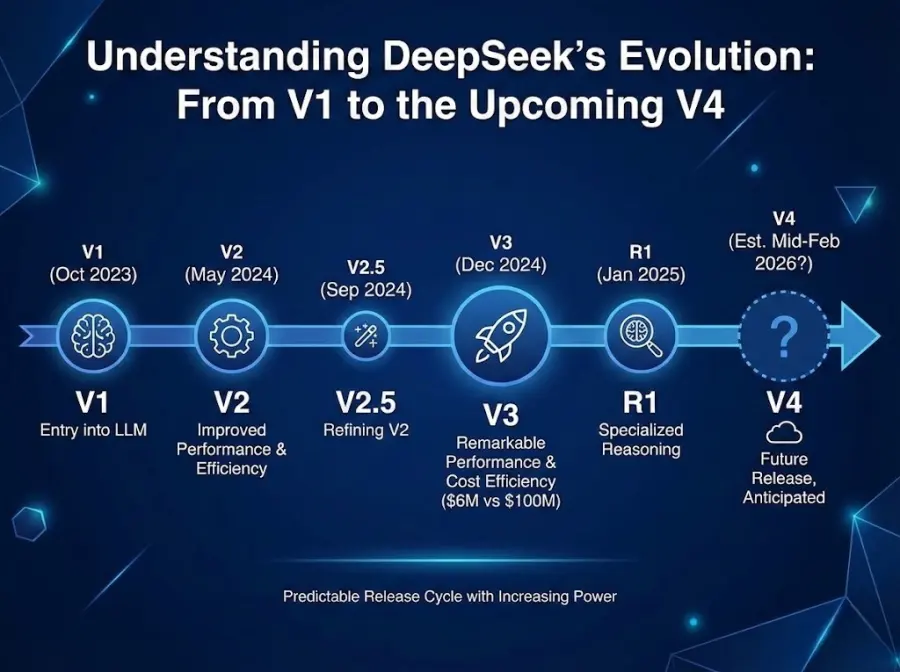

Understanding DeepSeek's Evolution: From V1 to the Upcoming V4

DeepSeek has quickly become a major player in the AI landscape through a strategy of releasing increasingly powerful models at regular intervals.

Key releases from DeepSeek include:

- DeepSeek V1 launched in October 2023, marking the company's entry into the large language model space
- DeepSeek V2 arrived in May 2024, bringing improved performance and efficiency
- DeepSeek V2.5 followed in September 2024, refining the previous version
- DeepSeek V3 reached users in December 2024, achieving strong performance at a fraction of the training cost of Western competitors' models
- DeepSeek R1, released in January 2025, introduced specialized reasoning capabilities and captured global attention

DeepSeek reported a cost of roughly 6 million dollars for V3's final training run, far less than the 100 million dollars or more reportedly spent on comparable models by competitors (DeepSeek's figure excludes earlier research and experiments). This cost efficiency, combined with strong performance, has made DeepSeek a respected player in global AI development.
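The roughly 6 million dollar figure can be reproduced from the DeepSeek-V3 technical report, which lists about 2.788 million H800 GPU-hours (pre-training, context extension, and post-training) and prices them at an assumed rental rate of 2 dollars per GPU-hour. The short calculation below shows that arithmetic; it covers only the final training run, not hardware purchases or earlier research.

```python
# Reproduces the headline V3 training-cost estimate from its reported inputs.
# GPU-hour figures (in thousands) as reported in the DeepSeek-V3 technical report;
# the $2 per GPU-hour rate is the report's own rental-price assumption.
GPU_HOURS_K = {
    "pre-training": 2664,
    "context extension": 119,
    "post-training": 5,
}
USD_PER_GPU_HOUR = 2.0

total_gpu_hours = sum(GPU_HOURS_K.values()) * 1_000
total_cost_usd = total_gpu_hours * USD_PER_GPU_HOUR

print(f"{total_gpu_hours:,} GPU-hours -> ${total_cost_usd / 1e6:.3f}M")
```

It prints `2,788,000 GPU-hours -> $5.576M`, which rounds to the commonly quoted 6 million dollars.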

DeepSeek's past releases suggest a rough release rhythm. Its releases in October 2023, May 2024, and December 2024 were about seven months apart, which would have pointed to July 2025 for the next flagship; instead, multiple industry sources now point to mid-February 2026. The company remains characteristically quiet about official announcements, leaving the developer community to speculate based on technical signals and research papers.

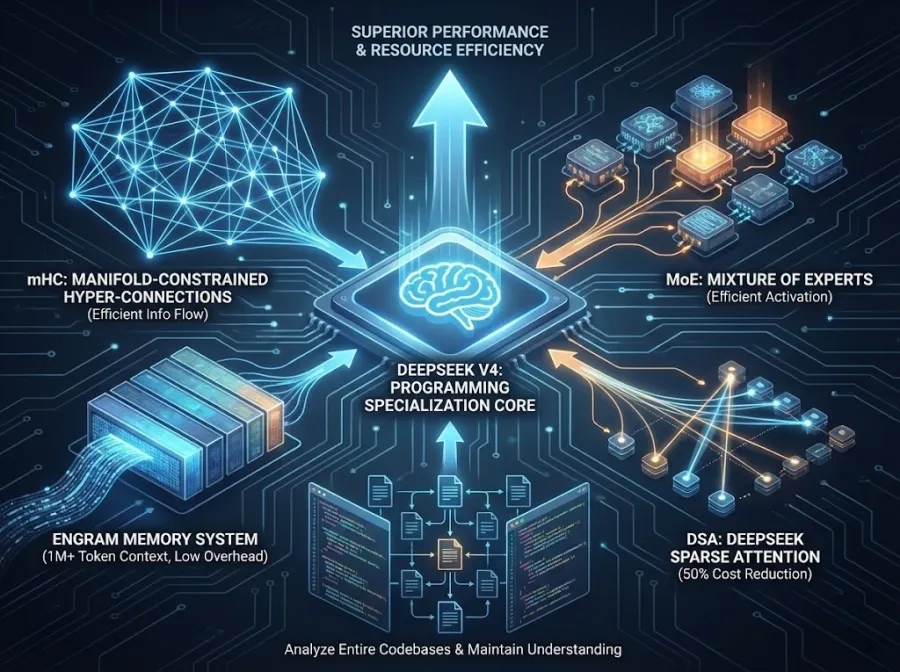

Predicted DeepSeek V4 Core Features and Architecture

DeepSeek V4 is expected to mark a meaningful shift in focus compared to previous models. While V3 was designed as a general-purpose language model, V4 reportedly concentrates on specialized programming tasks.

The reported architectural innovations include:

- mHC architecture: Manifold-Constrained Hyper-Connections are designed to let information flow more effectively across neural network layers, improving model quality without a proportional increase in computing resources
- Engram memory system: a conditional memory system designed to handle extremely long contexts and retrieve specific information from large documents with little computational overhead
- Mixture of Experts (MoE): continuing DeepSeek's proven approach, the model activates only the expert sub-networks needed for each token, improving efficiency
- DSA (DeepSeek Sparse Attention): an attention mechanism that reportedly cuts computational costs by about 50% compared to standard dense attention
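The Mixture of Experts idea is easiest to see in code. The following is a generic, textbook top-k routing sketch in plain Python, not DeepSeek's implementation: the router scores, the expert functions, and `k=2` are illustrative assumptions, and real MoE layers operate on tensors for every token and add load-balancing machinery that is omitted here.

```python
import math
from typing import Callable, List, Sequence


def softmax(xs: Sequence[float]) -> List[float]:
    # Subtract the max for numerical stability before exponentiating.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(
    x: float,
    router_logits: Sequence[float],
    experts: Sequence[Callable[[float], float]],
    k: int = 2,
) -> float:
    """Route one input to its top-k experts and mix their outputs.

    Only the k selected experts are evaluated; the others are never called,
    which is where the compute savings of sparse MoE come from.
    """
    # Pick the k experts with the highest router scores.
    top = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)[:k]
    # Renormalize the gate weights over the selected experts only.
    gates = softmax([router_logits[i] for i in top])
    # Weighted sum of the selected experts' outputs.
    return sum(g * experts[i](x) for g, i in zip(gates, top))
```

For example, with four experts that scale their input by 1, 10, 100, and 1000 and router scores `[0.1, 2.0, 2.0, -1.0]`, `moe_forward(1.0, scores, experts, k=2)` selects the second and third experts, weights them equally, and returns `55.0`; the first and fourth experts are never evaluated.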

Together, these advances are expected to let V4 deliver stronger performance while consuming fewer computational resources. The model is reported to handle more than one million tokens in a single context, enough to analyze entire codebases and keep track of relationships across many files at once.
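Whether a given codebase would fit in a one-million-token window can be estimated before sending it. The sketch below assumes roughly 4 characters per token, a common rule of thumb for BPE tokenizers; the real ratio depends on V4's tokenizer, which has not been published, and on the programming language. The file-suffix list and the function names are illustrative choices, not part of any DeepSeek tooling.

```python
from pathlib import Path
from typing import Iterable

# Assumption: about 4 characters per token, a rough rule of thumb for BPE tokenizers.
# Actual counts depend on the model's tokenizer and the language being tokenized.
CHARS_PER_TOKEN = 4
CONTEXT_LIMIT_TOKENS = 1_000_000  # the reported 1M+ token context for V4

DEFAULT_SUFFIXES = (".py", ".js", ".ts", ".java", ".cpp", ".h")


def estimate_repo_tokens(root: str, suffixes: Iterable[str] = DEFAULT_SUFFIXES) -> int:
    """Roughly estimate how many tokens the source files under `root` would use."""
    wanted = set(suffixes)
    total_chars = 0
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in wanted:
            # errors="ignore" skips undecodable bytes instead of raising.
            total_chars += len(path.read_text(encoding="utf-8", errors="ignore"))
    return total_chars // CHARS_PER_TOKEN


def fits_in_context(root: str, limit: int = CONTEXT_LIMIT_TOKENS) -> bool:
    """True if the estimated token count stays within `limit`."""
    return estimate_repo_tokens(root) <= limit
```

Because the estimate is heuristic, it is safer to leave generous headroom below the limit for the prompt itself and for the model's response.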

DeepSeek V4 Repository-Level Coding - A Game-Changing Capability

One of the most anticipated features of DeepSeek V4 is its ability to understand code at the repository level. Unlike current models that excel at writing individual functions or small code snippets, V4 is designed to comprehend how changes in one file affect other parts of a project.

This capability addresses a real bottleneck in AI-assisted software development. A developer could ask V4 to fix a bug that spans multiple files, and the model would be able to trace dependencies across the entire codebase, understanding the relationships between components. This moves AI coding assistance closer to what experienced human engineers actually need.
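Cross-file reasoning of this kind starts from knowing which files depend on which. As an illustration only (how V4 does this internally has not been disclosed), the sketch below uses Python's standard `ast` module to build an import graph for a small project and then walks it backward to find every module affected by a change. It handles only absolute `import x` and `from x import y` statements; relative and dynamic imports are skipped, and the module names in the example are hypothetical.

```python
import ast
from collections import defaultdict, deque
from typing import Dict, Set


def build_import_graph(sources: Dict[str, str]) -> Dict[str, Set[str]]:
    """Map each module name to the in-project modules it imports."""
    graph: Dict[str, Set[str]] = {}
    for module, code in sources.items():
        deps: Set[str] = set()
        for node in ast.walk(ast.parse(code)):
            if isinstance(node, ast.Import):
                deps.update(alias.name for alias in node.names)
            elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
                # Relative imports (level > 0) are skipped in this sketch.
                deps.add(node.module)
        # Keep only imports that refer to modules inside this project.
        graph[module] = {d for d in deps if d in sources}
    return graph


def affected_by(changed: str, graph: Dict[str, Set[str]]) -> Set[str]:
    """Return every module that directly or transitively imports `changed`."""
    reverse: Dict[str, Set[str]] = defaultdict(set)
    for module, deps in graph.items():
        for dep in deps:
            reverse[dep].add(module)
    # Breadth-first search over the reversed edges.
    seen: Set[str] = set()
    queue = deque([changed])
    while queue:
        current = queue.popleft()
        for dependent in reverse[current]:
            if dependent not in seen:
                seen.add(dependent)
                queue.append(dependent)
    return seen
```

For a project where `models` imports `utils`, `api` imports `models`, and `cli` imports `api`, `affected_by("utils", graph)` returns the set `{"models", "api", "cli"}`: the files a repository-aware assistant would need to re-check after editing `utils`.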

DeepSeek V4 Hardware Requirements - AI Without Breaking the Bank

A distinctive aspect of DeepSeek's philosophy is making powerful models accessible to developers without massive computing budgets.

Reports suggest that DeepSeek V4, at least in its lighter versions, will run on hardware ranging from consumer GPUs to data center setups:

- Dual NVIDIA RTX 4090 graphics cards
- A single NVIDIA RTX 5090 (the latest generation)
- Standard enterprise data center configurations

DeepSeek is expected to release lighter versions shortly after the main launch, such as DeepSeek-V4-Lite or Coder-33B, which would run on consumer GPUs with 24GB of memory. This emphasis on accessibility contrasts sharply with the direction taken by some Western AI companies, whose models increasingly require expensive specialized hardware.
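A quick way to sanity-check the 24GB figure is to estimate weight memory from parameter count and precision: billions of parameters times bits per parameter, divided by 8, gives gigabytes. The sketch assumes that "Coder-33B" means roughly 33 billion parameters and counts weights only; the KV cache, activations, and framework overhead need additional memory on top.

```python
def weight_memory_gb(params_billion: float, bits_per_param: int) -> float:
    """Approximate memory for model weights alone, in GB (1 GB = 1e9 bytes)."""
    # 1e9 params * (bits / 8) bytes each = params_billion * bits / 8 gigabytes.
    return params_billion * bits_per_param / 8


GPU_MEMORY_GB = 24  # a single consumer card such as an RTX 4090

for bits in (16, 8, 4):
    gb = weight_memory_gb(33, bits)
    verdict = "fits" if gb < GPU_MEMORY_GB else "does not fit"
    print(f"33B @ {bits}-bit: {gb:.1f} GB of weights, {verdict} in {GPU_MEMORY_GB} GB")
```

Only the 4-bit case (16.5 GB) leaves headroom on a 24GB card, which suggests that such lighter versions would most likely be run in quantized form on consumer GPUs.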

DeepSeek V4 vs. Claude vs. ChatGPT - A Predicted Comparison

How does the upcoming DeepSeek V4 stack up against existing leading models?

| Aspect | DeepSeek V4 (predicted) | Claude 3.5 Sonnet | ChatGPT (GPT-4o) |
|---|---|---|---|
| Specialization | Coding-focused | General-purpose | General-purpose |
| Context window | 1M+ tokens | 200K tokens | 128K tokens |
| Code generation | Excellent (repository-level) | Excellent | Good |
| Reasoning | Strong | Excellent | Good |
| Open source | Yes | No | No |
| Training cost | Estimated low | Undisclosed | Undisclosed |
| Access | Self-hosted (consumer-friendly) or API | API or web app | API or web app |

DeepSeek V4's internal benchmarks reportedly show it outperforming both Claude and OpenAI's GPT models on coding tasks specifically. However, Claude is still expected to lead on general reasoning, and ChatGPT to offer more versatility across diverse applications.

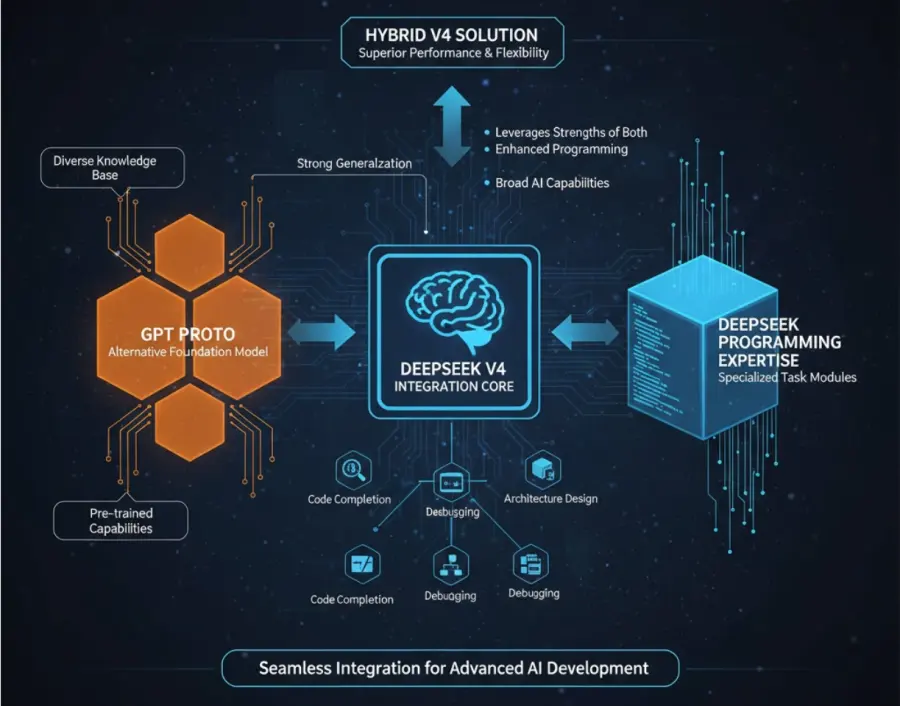

DeepSeek V4 Integration - Accessing the Model Through GPT Proto

For developers planning to integrate DeepSeek V4 into their projects, GPT Proto offers a practical way to reduce the technical complexity.

GPT Proto is a unified API platform that aggregates access to numerous AI models, including OpenAI's GPT series, Google's Gemini, Anthropic's Claude, and other leading models, through a single, standardized interface. GPT Proto says it will add support for DeepSeek V4 as quickly as possible after launch, and all previous DeepSeek versions are already available on the platform.

The platform addresses several practical problems developers face. Instead of managing separate API keys for different AI services, you work with one key. Instead of learning each provider's API documentation, you use a single OpenAI-compatible request format. GPT Proto also advertises pricing roughly 40 to 60 percent below official rates, which it attributes to aggregated volume purchasing.
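OpenAI-compatible formatting means requests follow the shape of OpenAI's Chat Completions API: a `model` name plus a list of role-tagged `messages`. The sketch below only builds such a request body; the model identifier `deepseek-v4` is a hypothetical placeholder, and the actual endpoint URL, model names, and authentication details should be taken from GPT Proto's own documentation.

```python
import json
from typing import Any, Dict, List


def build_chat_request(model: str, prompt: str, temperature: float = 0.2) -> Dict[str, Any]:
    """Build a request body in the OpenAI Chat Completions format."""
    messages: List[Dict[str, str]] = [
        {"role": "system", "content": "You are a helpful coding assistant."},
        {"role": "user", "content": prompt},
    ]
    return {"model": model, "messages": messages, "temperature": temperature}


# "deepseek-v4" is a hypothetical model identifier; check the provider's model list.
body = build_chat_request("deepseek-v4", "Explain what this function does: def f(x): return x * 2")
print(json.dumps(body, indent=2))
```

Because every model behind a compatible gateway accepts the same body, switching between providers is, in principle, a matter of changing the `model` string.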

Beyond cost savings, GPT Proto provides automatic failover. If one AI provider experiences an outage, your requests are routed to backup models so your application keeps running. For teams building production systems that rely on AI, this substantially reduces the risk of service disruptions.
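The failover idea can be illustrated with a client-side sketch: try each provider in order and return the first successful response. GPT Proto performs its failover on the server side and its exact routing rules are not described here, so the provider callables and the `AllProvidersFailed` exception below are hypothetical stand-ins.

```python
from typing import Callable, List, Sequence, Tuple


class AllProvidersFailed(Exception):
    """Raised when every provider in the chain has failed."""


def complete_with_failover(
    prompt: str,
    providers: Sequence[Tuple[str, Callable[[str], str]]],
) -> Tuple[str, str]:
    """Try each (name, call) pair in order and return the first success.

    Returns the provider name alongside the response so callers can log
    which backend actually answered.
    """
    errors: List[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # real code should catch the client's specific error types
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))
```

Ordering the list from preferred to fallback providers keeps the behavior predictable, and the collected error messages make it clear why each provider was skipped.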

Tip: For more information about GPT Proto, see https://gptproto.com/blog/gpt-proto-review

FAQs About DeepSeek V4

Will DeepSeek V4 really be free to use?

DeepSeek has released previous models under open licenses, with recent models such as R1 published under the MIT License. Although V4's licensing has not been announced, the company's track record suggests V4 will follow the same pattern, letting developers use it without licensing fees. Running the model still requires computing resources, either through cloud APIs or local hardware.

Can I run DeepSeek V4 on my personal computer?

Full-scale V4 is expected to require substantial hardware, reportedly 350GB to 400GB of VRAM for optimal performance. However, DeepSeek is expected to release lighter versions (such as Coder-33B) that can run on a single consumer GPU with 24GB of memory. Most developers will likely access the full V4 through APIs rather than running it locally.

Which programming languages will DeepSeek V4 support?

While specific details haven't been confirmed, DeepSeek's previous models have supported many programming languages, including Python, JavaScript, Java, and C++. V4 is expected to maintain this broad language coverage, though the company will likely highlight especially strong performance in the most popular languages.

Conclusion

DeepSeek V4 could be a meaningful milestone in making advanced AI capabilities accessible to the global developer community. By focusing specifically on code generation and understanding, the model targets real needs in software development workflows. Its new architecture, combining mHC and Engram technologies, suggests that progress in AI does not require unlimited resources. The release will likely accelerate adoption of AI-powered coding tools among developers who previously felt locked out by cost or complexity. Combined with platforms like GPT Proto that simplify integration and reduce expenses, DeepSeek V4 has the potential to reshape how developers approach writing, debugging, and maintaining software. Whether you're an independent developer or part of a larger team, DeepSeek V4 will give you another powerful option for using AI in your work. The competition between DeepSeek, Claude, and ChatGPT continues to push innovation forward, ultimately benefiting everyone who builds software.