Understanding the Evolution of the OpenAI API

The landscape of generative artificial intelligence moves at a blistering pace. Developers relying on the OpenAI API have witnessed remarkable shifts in capabilities and pricing structures recently. The OpenAI API now serves as the foundational backbone for countless enterprise applications globally. You can attribute this widespread adoption to the sheer reliability and continuous upgrades pushed directly to the OpenAI API endpoints.

Recently, the introduction of native audio handling dramatically altered how developers approach voice integration. The OpenAI API now processes raw audio streams with near-zero latency. This specific architectural shift makes the OpenAI API indispensable for building responsive, human-like voice agents. Furthermore, the OpenAI API supports advanced multi-modal workflows that process visual and auditory data simultaneously.

Why Developers Choose the OpenAI API

Integrating machine learning into production environments previously required specialized data science teams. The OpenAI API completely eliminates that massive barrier to entry. By sending a secure, standardized request to the OpenAI API, your application instantly gains access to cutting-edge reasoning engines. You focus entirely on building a phenomenal user experience while the OpenAI API handles the immense computational lifting.

Relying heavily on the OpenAI API means you never have to worry about server provisioning or global GPU shortages. The infrastructure powering the OpenAI API scales dynamically alongside your rapidly growing user base. Startups routinely prototype complex features using the OpenAI API over a single weekend. Once that specific feature goes viral, the exact same OpenAI API integration smoothly handles millions of concurrent global requests.

Control and customization represent another massive advantage associated with the OpenAI API. Unlike standard consumer-facing chat interfaces, the OpenAI API grants you granular authority over temperature settings, token limits, and system prompts. You can fine-tune the OpenAI API outputs to perfectly match your brand's specific tone of voice. This high level of precision explains exactly why Fortune 500 companies build their internal toolkits exclusively on the OpenAI API.

Core Capabilities of the OpenAI API

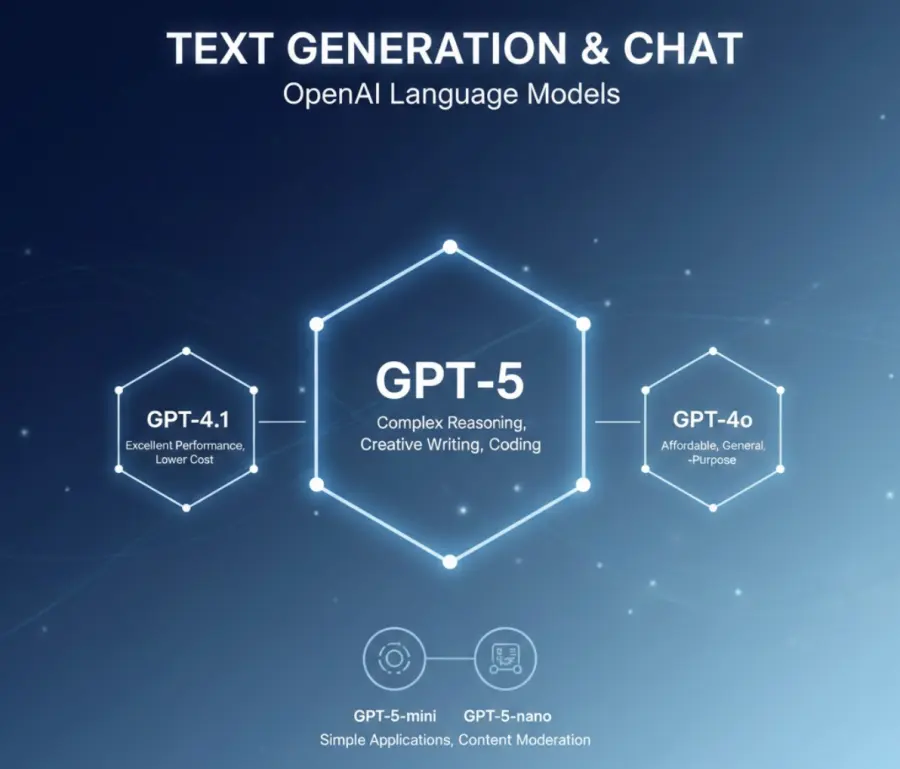

Text Generation via the OpenAI API

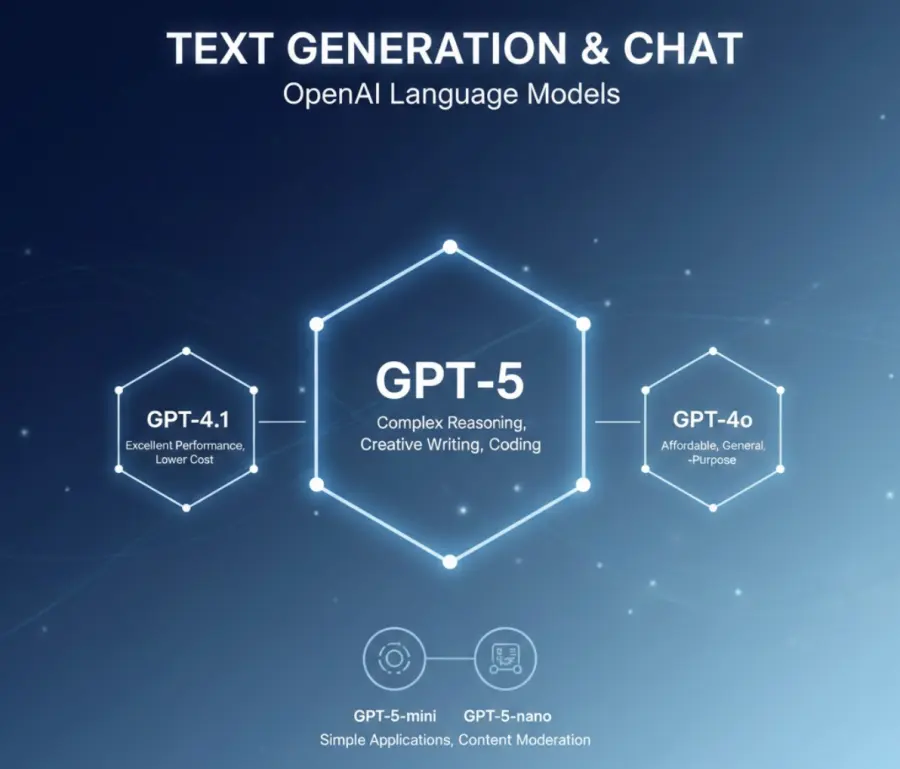

Text generation remains the most dominant and popular use case for the OpenAI API today. The current robust model lineup available through the OpenAI API includes powerhouses tailored for extreme reasoning and highly efficient alternatives tailored for speed. When you route text-based tasks through the OpenAI API, you tap into models trained on vast swaths of collective human knowledge. The OpenAI API excels at drafting professional emails, summarizing massive legal documents, and generating complex, bug-free code snippets.

Choosing the absolute best text model within the OpenAI API ecosystem requires balancing desired performance against your allocated budget. The premium flagship models integrated into the OpenAI API deliver unmatched reasoning for intricate, multi-step logic puzzles. Conversely, the budget-friendly tiers of the OpenAI API handle routine data classification tasks flawlessly at a fraction of the cost. Expert developers who thoroughly master the OpenAI API know exactly which specific model endpoint to call for distinct computing workloads.

Common enterprise applications powered daily by the OpenAI API include automated, highly conversational customer support desks and dynamic content management systems. You can systematically program the OpenAI API to analyze raw customer sentiment instantly across thousands of reviews. Furthermore, countless elite development teams leverage the OpenAI API to power internal, proprietary coding assistants. The sheer versatility of the OpenAI API establishes it as a universal, non-negotiable tool for modern software engineering.

Voice Integration with the OpenAI API

Voice technology recently took a massive, unprecedented leap forward with crucial updates pushed to the OpenAI API. Older architectural systems awkwardly chained together separate transcription and speech-generation tools, resulting in frustrating conversational delays. The newly upgraded real-time endpoints within the OpenAI API process native audio streams natively. This critical advancement means the OpenAI API immediately understands tone, raw emotion, and subtle, complex speech nuances.

Modern businesses are aggressively leveraging this specific audio feature of the OpenAI API to completely replace outdated, frustrating phone tree systems. When a frustrated customer calls a support line, the OpenAI API processes their spoken words and formulates an empathetic response in milliseconds. Amazingly, the OpenAI API can even switch spoken languages mid-sentence without missing a conversational beat. This remarkable linguistic flexibility makes the OpenAI API the ultimate foundational solution for global, multilingual customer service operations.

Visual Processing Within the OpenAI API

The profound utility of the OpenAI API is absolutely not limited to standard text and voice processing. By accessing the dedicated DALL-E visual endpoints through the OpenAI API, software developers can generate stunning, high-resolution original images programmatically. You simply send a highly descriptive text string payload directly to the OpenAI API, and it swiftly returns a completely unique image file. Digital marketing platforms heavily utilize this creative aspect of the OpenAI API to completely automate their daily social media asset creation pipelines.

Recent major improvements pushed to the visual side of the OpenAI API include significantly better text rendering within generated graphics. You can also seamlessly pass your own existing images straight into the OpenAI API for highly targeted, complex edits or dramatic artistic style transfers. This comprehensive multimodal flexibility firmly solidifies the OpenAI API as a complete, all-in-one creative suite for modern application developers.

Structuring Data with OpenAI API Embeddings

Running silently behind the scenes, the OpenAI API provides unmetered access to incredibly powerful mathematical embedding models. These specific foundational models convert raw text strings into dense, multi-dimensional numerical vectors. By heavily leveraging the OpenAI API for these data embeddings, you can successfully build highly accurate, context-aware semantic search engines. The OpenAI API actively powers the eerily accurate recommendation systems you encounter daily on modern, high-traffic e-commerce websites.

When you programmatically feed a massive product catalog into the OpenAI API embedding endpoint, it mathematically maps the invisible conceptual relationships between disparate items. This semantic mapping allows your software application to instantly surface highly relevant results even if the end-user searches for vague, poorly articulated concepts. The embedding feature of the OpenAI API remains arguably its most underrated, yet financially impactful, technical capability.

Navigating OpenAI API Pricing and Costs

Thoroughly understanding the underlying billing structure is absolutely critical before deploying the OpenAI API into a live production environment. The OpenAI API operates strictly on a transparent pay-as-you-go token consumption model. You are billed explicitly based on the precise volume of data you send into the OpenAI API (input tokens) combined with the data it dynamically generates (output tokens). Carefully and continuously monitoring your OpenAI API usage prevents unexpected, catastrophic budget overruns.

Current OpenAI API Model Tier Rates

Premium, bleeding-edge models accessed directly via the OpenAI API naturally carry significantly higher operational price tags. For instance, repeatedly querying the flagship reasoning model through the OpenAI API costs substantially more than utilizing its optimized miniature counterparts. However, the team behind the OpenAI API frequently drops token prices on older, highly stable models to remain fiercely competitive. Software developers must continually review the official OpenAI API pricing documentation to relentlessly optimize their infrastructure spending.

Using the OpenAI API for native audio and high-resolution image generation follows entirely different structural pricing rules. Audio voice processing routed through the OpenAI API is generally metered and billed per minute rather than per token. Meanwhile, calling the visual endpoint via the OpenAI API incurs a simple flat fee per individual image successfully generated. Understanding these highly distinct OpenAI API pricing buckets helps your finance team forecast monthly backend infrastructure costs with extreme accuracy.

Real-World OpenAI API Cost Scenarios

Consider a high-traffic, automated customer service bot powered entirely by the robust capabilities of the OpenAI API. If you lazily route 100,000 routine queries a month through a premium, overpowered OpenAI API model, your cloud costs could easily exceed several thousand dollars. However, by intelligently switching that exact same routine workload to a specialized budget OpenAI API model, you might miraculously reduce that monthly bill to under fifty dollars. This stark, undeniable financial contrast highlights exactly why deliberate model selection within the OpenAI API ecosystem is critical.

Another incredibly common enterprise scenario involves intelligently processing massive legal document libraries via the OpenAI API. Highly competent, smart developers heavily utilize the built-in context caching features native to the OpenAI API. When you intentionally send the exact same massive reference document to the OpenAI API multiple times, automated caching discounts immediately apply to your account. This specific, highly optimized OpenAI API feature can effortlessly slash your daily processing costs by up to 75% for highly repetitive analytical tasks.

Step-by-Step OpenAI API Setup Tutorial

Getting your local environment started with the OpenAI API requires only a few short minutes of basic configuration. First, you must register a dedicated developer account directly on the official platform to access the OpenAI API. Once successfully registered, navigate directly to the dashboard billing section to attach a corporate payment method, as the OpenAI API strictly requires active billing for live production access. Without a valid, active credit card on file, your programmatic OpenAI API calls will simply fail and return error codes.

Next, you must securely generate your unique, cryptographic OpenAI API keys. These specific alphanumeric keys act as your highly secure, unforgeable passport directly into the OpenAI API neural network. You must treat your active OpenAI API keys exactly like highly sensitive bank passwords. Never, under any circumstances, expose your OpenAI API keys in public code repositories or client-side JavaScript code, as malicious actors actively scrape the internet specifically for exposed OpenAI API credentials.

After successfully securing your encryption keys, immediately install the official software development kit tailored for the OpenAI API. The maintained Python library for the OpenAI API remains exceptionally popular among data scientists and backend engineers. Modern frontend web developers typically gravitate toward the highly optimized Node.js package built for the OpenAI API. These official, well-documented libraries completely abstract away the complex HTTP routing requests, making your initial OpenAI API integration incredibly smooth and error-free.

Finally, write your very first basic script to successfully ping the live OpenAI API. Construct a simple text prompt, programmatically pass it directly to the chat completion endpoint of the OpenAI API, and command your terminal to print the response. When you actually see the generated text magically appear in your command line terminal, you have successfully authenticated and communicated with the OpenAI API. From this basic, fundamental foundation, you can rapidly scale your OpenAI API usage to handle incredibly complex, enterprise-grade workflows.

Security and Compliance within the OpenAI API

Massive enterprise adoption of the OpenAI API hinges entirely on stringent, unyielding security measures. When securely routing highly sensitive corporate or user data through the OpenAI API, legal compliance absolutely cannot be an afterthought. Fortunately, the dedicated enterprise tier of the OpenAI API offers incredibly robust, legally binding data privacy guarantees. Unlike the public consumer web interface, private data sent via requests to the enterprise OpenAI API is explicitly and legally excluded from any future model training by default.

This strict, highly enforced zero-retention policy makes the OpenAI API exceptionally attractive to highly regulated sectors like modern healthcare and global finance. Large organizations can securely configure the OpenAI API to meet strict, uncompromising HIPAA compliance standards. Furthermore, the massive cloud infrastructure securely hosting the OpenAI API maintains comprehensive, annually audited SOC 2 Type II certifications. Relying completely on the OpenAI API means your internal legal and compliance teams can breathe incredibly easy knowing corporate data is securely encrypted both in active transit and at rest.

Granular access control located within the OpenAI API developer dashboard allows system administrators to seamlessly implement strict permission boundaries. You can easily generate heavily scoped API keys for the OpenAI API that strictly allow only highly specific actions. If an external contractor only ever needs to access the semantic embedding endpoint of the OpenAI API, you can easily lock down their key accordingly. This granular, role-based control over the OpenAI API significantly minimizes the potential blast radius of dangerous credential leaks.

Handling Rate Limits and Errors in the OpenAI API

Building highly resilient, fault-tolerant applications on top of the OpenAI API fundamentally requires defensive programming techniques. As your application traffic scales globally, you will inevitably encounter strict rate limits heavily imposed by the OpenAI API. The protective OpenAI API architecture restricts the total number of requests per minute (RPM) and tokens per minute (TPM) directly based on your specific billing tier. Thoroughly understanding these highly specific computational limits is crucial for maintaining a completely stable, uninterrupted OpenAI API integration.

When you accidentally exceed your monthly allotted quota, the protective OpenAI API immediately returns a standard HTTP 429 status code. Novice, inexperienced developers often completely fail to catch these specific errors, causing their OpenAI API-dependent application features to crash entirely. Expert developers flawlessly implement mathematical exponential backoff algorithms when rapidly querying the OpenAI API. If the OpenAI API forcefully rejects a rapid request due to active rate limiting, your software system should automatically pause briefly before aggressively attempting the OpenAI API call again.

Actively monitoring the HTTP response headers routinely returned by the OpenAI API gives you critical real-time visibility into your rapidly depleting limits. Every single successful response from the OpenAI API includes highly precise metadata detailing your current token consumption metrics. By actively parsing these specific OpenAI API headers, your backend application can proactively slow down outbound requests before violently hitting a hard usage wall. This highly proactive management of the OpenAI API ensures a completely seamless, uninterrupted experience for your end-users.

Implementing RAG Architectures with the OpenAI API

Retrieval-Augmented Generation (RAG) strongly represents the most powerful, highly requested design pattern for the OpenAI API today. Standard baseline models accessed directly through the OpenAI API completely lack knowledge of your deeply proprietary internal business data. By intelligently combining rapid vector databases directly with the OpenAI API, you securely inject highly specific operational context directly into the model's active brain. The OpenAI API strongly excels at seamlessly synthesizing this freshly injected data into coherent, remarkably accurate responses.

The highly complex RAG workflow relies extremely heavily on the robust embedding capabilities of the OpenAI API. First, you continuously pass your massive internal documents straight through the OpenAI API embedding endpoint to thoroughly vectorize them. When a corporate user asks a question, your system searches your internal vector database for highly relevant text chunks. You then systematically bundle those precise chunks alongside the user's query and send the entire massive package directly to the OpenAI API for final, intelligent processing.

This highly specific data architecture successfully prevents the OpenAI API from accidentally hallucinating incorrect, fabricated facts. Because the OpenAI API is strictly constrained by the hard context you actively provide, the programmatic outputs become remarkably and consistently reliable. Global legal firms heavily use the OpenAI API within secure RAG setups to instantly query hundreds of thousands of complex case files. The seamless combination of your proprietary data and the supreme reasoning engine of the OpenAI API creates a truly insurmountable competitive advantage.

Customizing Behavior: Fine-Tuning the OpenAI API

While basic prompt engineering cleanly solves most routine problems, sometimes you desperately need the OpenAI API to adopt a highly specific persona or rigid output structure. This exact scenario is where the advanced fine-tuning capabilities of the OpenAI API truly shine. Fine-tuning securely allows you to permanently train a custom model variant that remains hosted securely within the OpenAI API infrastructure. You simply upload a pristine dataset of thousands of perfect input-output examples directly to the OpenAI API.

The highly advanced OpenAI API then uses this specific dataset to carefully adjust the underlying mathematical model weights slightly. The resulting custom model, accessed securely via the OpenAI API, responds much faster and strictly requires significantly shorter contextual prompts. This optimization drastically and permanently reduces the token costs associated with your heavy daily OpenAI API usage. Fine-tuning directly through the OpenAI API is exceptionally effective for highly specialized tasks like proprietary code generation or highly complex medical text summarization.

Maintaining a highly performant fine-tuned model via the OpenAI API completely requires ongoing iteration and monitoring. As your core business needs organically evolve, you should continuously upload newly generated training data to the OpenAI API. The advanced analytical tools provided natively by the OpenAI API make it incredibly simple to thoroughly evaluate the exact performance of your custom models against standard baseline metrics. This constant, relentless refinement ensures your dedicated OpenAI API endpoints continually remain highly optimized over long periods of time.

Advanced Strategies for OpenAI API Optimization

Mastering Prompt Caching in the OpenAI API

Context caching remains arguably the most powerful, direct cost-saving mechanism built heavily into the OpenAI API. Whenever you repeatedly pass a massive system prompt directly to the OpenAI API, it requires significant cloud compute to process. By smartly structuring your programmatic requests to actively utilize the OpenAI API caching layer, you only ever pay the massive full price for the initial data read. Subsequent high-volume OpenAI API calls that utilize the exact same massive context window automatically receive massive, highly impactful financial discounts.

Strategic OpenAI API Model Routing

Do not lazily rely on a single, massive model for all your high-volume OpenAI API requests. Build an intelligent software routing layer within your application that actively evaluates the strict complexity of a user's prompt before aggressively hitting the OpenAI API. Systematically send trivial, routine questions straight to the absolute cheapest OpenAI API endpoint currently available. Strictly reserve the absolute most expensive, highly capable OpenAI API models only for critical tasks that require deep, complex logical reasoning.

Batch Processing with the OpenAI API

For large-scale, asynchronous computing tasks, the dedicated batch endpoint of the OpenAI API is a massive financial lifesaver. If you are systemically summarizing tens of thousands of complex user reviews overnight, you absolutely do not need instant, real-time responses from the OpenAI API. Systematically submit these massive workloads directly to the asynchronous batch queue of the OpenAI API. The highly efficient OpenAI API seamlessly processes these lower-priority computing tasks during global off-peak hours, automatically granting you a steep discount on standard token rates.

Refining Prompts for the OpenAI API

Highly verbose, conversational prompts force the OpenAI API to uselessly process completely unnecessary text tokens. Vigorously audit your internal system instructions regularly to ensure they remain exceptionally crisp, concise, and highly direct. Explicitly instruct the OpenAI API to strongly respond in highly structured data formats, like strict, highly valid JSON arrays. This strict architectural requirement completely prevents the OpenAI API from generating useless conversational filler, which directly and immediately reduces your total output token expenditure.

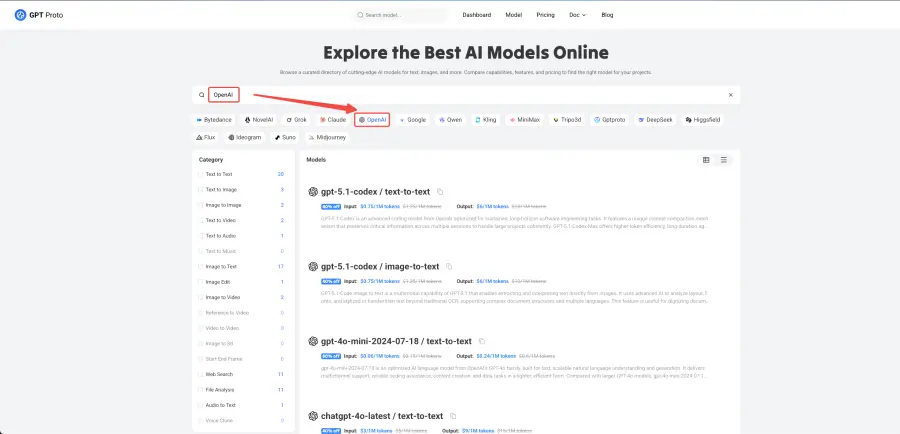

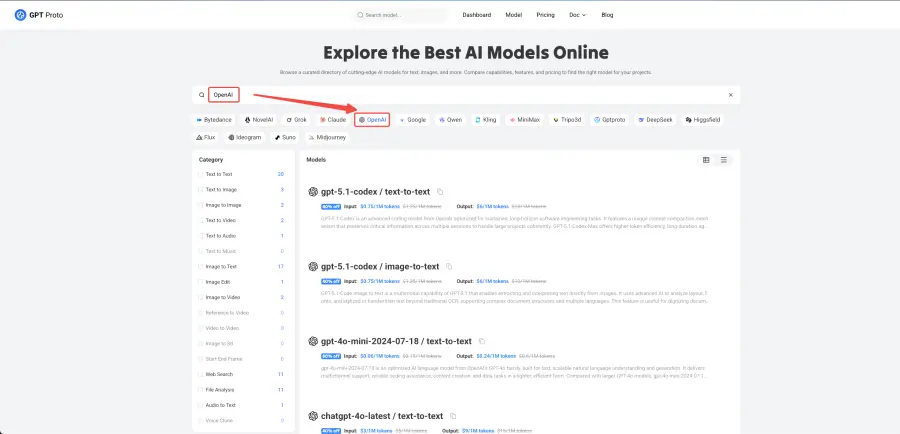

Alternative Multiple AI API Platforms

While the standalone OpenAI API remains incredibly powerful, some highly experienced developers vastly prefer robust aggregation platforms that securely offer multiple models in one highly centralized place. Unified tools like the GPT Proto API act as a comprehensive, highly flexible solution that seamlessly connects your application to top foundational AI models through a single, highly stable interface. This completely bypasses the need to strictly lock your application exclusively to the OpenAI API.

This centralized routing approach offers several massive architectural advantages. You can easily compare complex outputs from the OpenAI API against competing mathematical models in real-time. You can programmatically switch active providers based entirely on your immediate needs, managing all your varied cloud usage directly from one unified dashboard. The highly flexible pay-as-you-go financial model makes it incredibly cost-effective for smart developers who demand supreme flexibility without permanently committing to the OpenAI API ecosystem entirely.

Final Thoughts on the OpenAI API

Mastering the incredible depths of the OpenAI API is absolutely an essential, non-negotiable skill for modern software engineers. The raw, immense computational utility provided directly by the OpenAI API allows incredibly small startup teams to seamlessly build software products that easily rival massive enterprise software suites. By thoroughly understanding exactly how the OpenAI API mathematically structures its highly complex pricing, you can securely scale your software applications aggressively without bankrupting your startup.

Always rigorously monitor your active OpenAI API dashboards meticulously to catch anomalous usage spikes incredibly early. Continuously and aggressively test newly released models the absolute second they drop on the live OpenAI API network. The AI landscape shifts dramatically every single month, and constantly optimizing your secure OpenAI API integration remains a highly critical ongoing process. Fully embrace the immense computing power of the OpenAI API, diligently implement strict operational cost controls, and confidently watch your software application's native capabilities soar.