The landscape of artificial intelligence is shifting from static knowledge bases to real-time information retrieval. For developers seeking to build applications grounded in current data, the Perplexity AI API has emerged as a critical tool in 2025. By leveraging the Sonar model family, this interface offers live web search capabilities complete with verifiable citations. However, navigating its unique pricing structure and technical implementation requires a strategic approach. This guide explores the features, costs, and integration steps for the Perplexity API, while also highlighting unified alternatives like GPT Proto for optimized AI access.

Perplexity AI API 2025: Pricing, Features & Integration

Discover everything about the Perplexity AI API in 2025. Learn about its features, pricing, setup with a Pro account, pros and cons, and why GPT Proto might be a better alternative for unified AI access.

The Evolution of Online LLMs

In the early stages of the generative AI boom, developers faced a significant hurdle: the "knowledge cutoff." Models were brilliant but stuck in the past, unable to comment on yesterday's news or today's stock prices. The launch of the Perplexity AI API changed this dynamic. By marrying the reasoning capabilities of large language models with a proprietary search index, Perplexity offered a solution that didn't just hallucinate answers; it researched them.

As we move through 2025, "grounded" AI, meaning systems that cite their sources, has become a baseline requirement for enterprise and consumer applications alike. Whether you are building a financial analysis bot, a dynamic travel planner, or an automated news aggregator, understanding how to leverage the Perplexity AI API is essential for delivering accuracy and trust.

Understanding the Perplexity Ecosystem

Perplexity AI began as a consumer-facing "answer engine," challenging the dominance of traditional search engines by providing direct, synthesized answers rather than a list of blue links. The core value proposition was simple: reduce the time it takes to find the truth.

For developers, the Perplexity AI API exposes this same infrastructure programmatically. It allows you to send a query, have an AI model search the web in real time, read multiple sources, and return a cohesive answer with inline citations. This process, often referred to as RAG (Retrieval-Augmented Generation) as a Service, removes the complex engineering burden of building your own web scrapers and vector databases.

Unlike standard LLMs that rely solely on training data, the Perplexity AI API is dynamic. If a major event happens this morning, the API can typically surface it by this afternoon. This capability is powered by the Sonar model family, a set of fine-tuned versions of open-source models (such as Llama 3) optimized specifically for search and summarization tasks.

Core Features of the Perplexity AI API

1. Real-Time Web Grounding

The standout feature is the "online" capability. When you make a request to the Perplexity AI API, the system performs a live search across its index, filtering out low-quality SEO spam and favoring authoritative sources. The goal is generated text that is not just linguistically coherent but grounded in the latest available data. Grounding reduces errors rather than eliminating them, which is why the citations described next matter.

2. Verifiable Citations

Trust is the currency of the AI era. In many professional settings, an answer without a source is useless. The API returns a structured list of citations used to generate the response. This allows developers to display footnotes or hyperlinks in their UI, giving end-users the ability to verify claims instantly. This transparency is crucial for applications in legal, medical, or academic domains.

3. The Sonar Model Family

Perplexity offers different tiers of models to balance speed, cost, and intelligence:

- Sonar: A lightweight, fast model ideal for simple queries and quick lookups. It is cost-effective and low-latency.

- Sonar Pro: A larger model built for heavier reasoning. It performs deeper research, handles complex multi-step queries, and generally provides more nuanced answers. It is best suited for deep-dive research tasks.

4. OpenAI Compatibility

To reduce friction for developers, the Perplexity AI API follows the OpenAI chat completions request format, which has become a de facto industry standard. If you have an existing application built on GPT-4, switching to or adding Perplexity is often as simple as changing the `base_url`, the API key, and the `model` name in your code. This interoperability significantly lowers the barrier to entry.
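As a rough illustration of that swap, the sketch below builds (but does not send) the same chat request for two providers using only the Python standard library. The `PROVIDERS` table and the `build_chat_request` helper are hypothetical names introduced here; the Perplexity URL is the one used later in this guide, and the model names are examples rather than a complete list.

```python
import json
import urllib.request

# Hypothetical provider table: only the base URL and model name differ.
PROVIDERS = {
    "openai": {"base_url": "https://api.openai.com/v1", "model": "gpt-4o"},
    "perplexity": {"base_url": "https://api.perplexity.ai", "model": "sonar-pro"},
}


def build_chat_request(provider: str, api_key: str, prompt: str) -> urllib.request.Request:
    """Build (without sending) a chat-completions request for the chosen provider."""
    cfg = PROVIDERS[provider]
    body = {
        "model": cfg["model"],
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{cfg['base_url']}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


# Same call site, different provider: only the lookup key changes.
request = build_chat_request("perplexity", "YOUR_API_KEY", "What changed in AI this week?")
```

If you use the OpenAI Python SDK instead, the equivalent change is passing `base_url="https://api.perplexity.ai"` and your Perplexity key when constructing the client.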

Detailed Pricing Analysis

Pricing for the Perplexity AI API differs from standard LLM providers because it involves two distinct cost centers: compute (tokens) and search (requests).

The Dual-Cost Structure

Most AI APIs charge strictly per million tokens. Perplexity charges for tokens, but also applies a flat fee for every search request performed. The two components are:

- Token Costs: You pay a set rate for input tokens (what you send) and output tokens (what the AI writes).

- Request Fees: There is an additional cost (e.g., $5 per 1,000 searches) for the "online" functionality.

This structure means that short, frequent queries can become expensive quickly, because the request fee applies even when the token count is low. Conversely, a long, complex report spreads a single request fee across many tokens, so the fee makes up a smaller share of its cost. Developers must carefully model their usage patterns. If your app generates thousands of searches a day, the request fees will likely constitute the bulk of your invoice: at the illustrative $5 per 1,000 searches, 10,000 searches a day adds $50 a day before any token charges.
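To make the trade-off concrete, here is a small cost model. It is a sketch only: the `Rates` class and `estimate_cost` function are hypothetical, the $5 per 1,000 requests figure is the illustrative one from this section, and the per-token rates are placeholders that you should replace with the current price list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    input_per_million: float      # USD per 1M input tokens (placeholder value)
    output_per_million: float     # USD per 1M output tokens (placeholder value)
    per_thousand_requests: float  # USD per 1,000 search requests


def estimate_cost(rates: Rates, requests: int, input_tokens: int, output_tokens: int) -> dict:
    """Estimate spend for `requests` calls with the given per-call token counts."""
    token_cost = (
        requests * input_tokens * rates.input_per_million / 1_000_000
        + requests * output_tokens * rates.output_per_million / 1_000_000
    )
    request_cost = requests * rates.per_thousand_requests / 1_000
    total = token_cost + request_cost
    return {
        "token_cost": token_cost,
        "request_cost": request_cost,
        "total": total,
        "request_share": request_cost / total if total else 0.0,
    }


# Placeholder rates: $1 per 1M tokens in and out, $5 per 1,000 requests.
rates = Rates(input_per_million=1.0, output_per_million=1.0, per_thousand_requests=5.0)

short_queries = estimate_cost(rates, requests=10_000, input_tokens=50, output_tokens=150)
long_reports = estimate_cost(rates, requests=100, input_tokens=2_000, output_tokens=8_000)
```

Under these placeholder rates, the 10,000 short queries cost about $52, of which $50 is request fees, while the 100 long reports cost about $1.50, of which only $0.50 is request fees.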

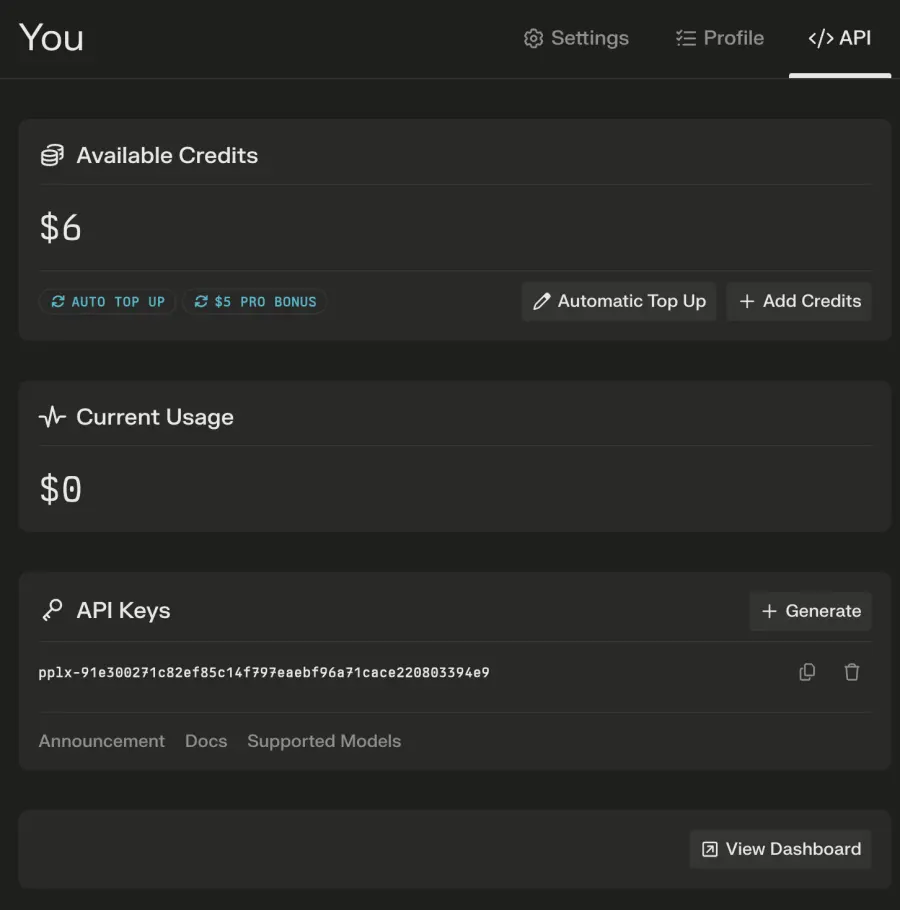

Managing Your Budget

Pro subscribers to the consumer service often receive a small monthly credit (e.g., $5) toward API usage. However, for production-scale applications, you will need to add a credit card and purchase credits in advance. This prepaid model caps your spend at the balance you have purchased, so you cannot accidentally run up a massive bill, but it also requires monitoring to prevent service interruptions if your balance hits zero.

Pros & Cons for Developers

Advantages

- Freshness: Few options match it for up-to-the-minute data. If your use case depends on current events, stock movements, or breaking news, the Perplexity AI API has a clear edge over standard GPT-4 or Claude models that answer from training data alone.

- Sourcing: The built-in citation engine saves developers from building complex retrieval pipelines. You get the answer and the proof in a single API call.

- Speed of Implementation: Because it handles the "searching" and "reading" internally, you don't need to manage headless browsers, proxies, or scraper bots.

Disadvantages

- Cost Complexity: The additional fee per search request makes it harder to predict monthly spend compared to token-only models.

- Rate Limits: Depending on your tier, you may face stricter rate limits than other providers, which can hamper high-velocity applications.

- Dependency: You are relying on Perplexity's search index. If their crawler hasn't indexed a specific niche site yet, the model won't know about it.
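One common way to soften the rate-limit disadvantage is to retry with exponential backoff when the server answers HTTP 429 (Too Many Requests). The sketch below assumes a hypothetical `send` callable that performs one request and returns a `(status_code, body)` tuple; the delays and attempt count are arbitrary starting points, not values recommended by Perplexity.

```python
import random
import time
from typing import Callable, Tuple


def call_with_backoff(
    send: Callable[[], Tuple[int, dict]],
    max_attempts: int = 5,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Tuple[int, dict]:
    """Call `send` until it stops returning 429 or the attempts run out."""
    status, body = 0, {}
    for attempt in range(max_attempts):
        status, body = send()
        if status != 429:
            return status, body
        if attempt < max_attempts - 1:
            # Double the wait each time and add jitter so clients do not retry in lockstep.
            delay = base_delay * (2 ** attempt) + random.uniform(0, base_delay)
            sleep(delay)
    # Still rate limited after the final attempt: hand the last response back to the caller.
    return status, body
```

The `sleep` parameter is injectable so the function can be tested without waiting; in production the default `time.sleep` is used.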

Step-by-Step Integration Guide

Ready to build? Integrating the Perplexity AI API is straightforward thanks to its standardized design. Follow these steps to get your first request running.

Step 1: Account Setup & Payment

Navigate to the Perplexity settings page. You do not strictly need a Pro subscription to use the API, but you must set up a payment method. The API operates on a credit system, so you will need to "top up" your account (e.g., buy $10 or $50 worth of credits) before you can generate keys.

Step 2: Generating Your API Key

Once your balance is positive, go to the API section of the dashboard. Click "Generate API Key." Be sure to copy this immediately and store it in your `.env` file or a secrets manager. For security, never hardcode this key into client-side applications.
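A minimal sketch of reading the key on the server side is shown below. The variable name `PERPLEXITY_API_KEY` and the `load_api_key` helper are assumptions made for this example; use whatever name your deployment or `.env` loader already defines.

```python
import os


def load_api_key(var_name: str = "PERPLEXITY_API_KEY") -> str:
    """Read the API key from the environment and fail loudly if it is missing."""
    key = os.environ.get(var_name, "").strip()
    if not key:
        raise RuntimeError(f"Set the {var_name} environment variable before calling the API.")
    return key
```

Failing at startup with a clear message is usually easier to debug than a 401 response from the first request.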

Step 3: Making Your First Request

You can use standard HTTP libraries or the OpenAI Python SDK. Here is a conceptual example of how a request looks:

    POST https://api.perplexity.ai/chat/completions
    Content-Type: application/json
    Authorization: Bearer YOUR_API_KEY

    {
      "model": "sonar-pro",
      "messages": [
        {"role": "system", "content": "Be precise and cite sources."},
        {"role": "user", "content": "What are the top AI trends in Q1 2025?"}
      ],
      "temperature": 0.2
    }

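The response follows the OpenAI-style layout, so the answer text sits in the `content` of the first choice's message (`choices[0].message.content`). Alongside it, a `citations` field contains an array of the URLs used to construct that answer.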
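The request and response handling above can be sketched in Python with only the standard library. The `ask` and `extract_answer` helpers are hypothetical names, and the response shape (answer under `choices[0].message.content`, a top-level `citations` array of URL strings) is an assumption based on the description in this guide; check it against a real response before relying on it.

```python
import json
import urllib.request
from typing import Any, Dict, List, Tuple

API_URL = "https://api.perplexity.ai/chat/completions"  # endpoint from the example request


def extract_answer(response: Dict[str, Any]) -> Tuple[str, List[str]]:
    """Return (answer_text, citation_urls) from a parsed JSON response."""
    # OpenAI-style responses nest the text under choices[0].message.content.
    answer = response["choices"][0]["message"]["content"]
    # Assumption: citations arrive as a top-level array of URL strings.
    citations = list(response.get("citations") or [])
    return answer, citations


def ask(api_key: str, question: str, model: str = "sonar-pro") -> Tuple[str, List[str]]:
    """Send one question and return the answer with its citations (needs network access)."""
    payload = {
        "model": model,
        "messages": [
            {"role": "system", "content": "Be precise and cite sources."},
            {"role": "user", "content": question},
        ],
        "temperature": 0.2,
    }
    request = urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=30) as resp:
        return extract_answer(json.load(resp))
```

Keeping `extract_answer` separate from the network call makes it easy to unit-test the parsing against saved responses.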

The Unified Alternative: Why Choose GPT Proto?

While the Perplexity AI API is powerful, relying on a single vendor for critical infrastructure introduces risks regarding uptime, price hikes, and model availability. This is where unified API platforms allow for a more robust architecture.

Solving the Vendor Lock-in Problem

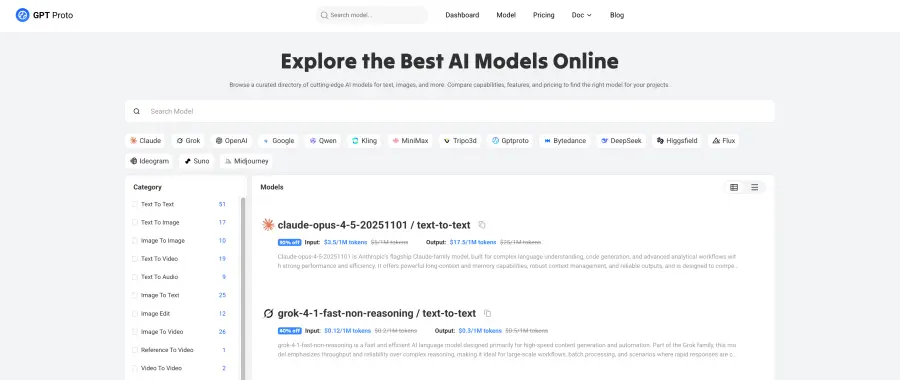

Developing directly against one provider means that if they change their pricing or suffer an outage, your application is directly exposed. GPT Proto acts as a universal gateway, giving you access to Perplexity's models alongside 200+ other state-of-the-art models from OpenAI, Anthropic, and Google, all through a single API key.

Cost and Performance Optimization

By using a unified platform, you can route traffic intelligently. For example, you might use the Perplexity AI API specifically for queries that require live web search, while routing creative writing or coding tasks to Claude 3.5 or GPT-4o, which might be cheaper or better suited for those specific tasks. GPT Proto often secures volume discounts, allowing developers to access these premium models at significantly lower rates—sometimes up to 40% cheaper than direct provider pricing.
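The routing idea can be sketched as a small lookup. Everything here is illustrative: `ROUTES`, `DEFAULT_MODEL`, and `choose_model` are hypothetical, and the model identifiers are placeholders whose exact spelling depends on the provider or gateway you call.

```python
# Placeholder model identifiers; adjust them to the names your provider or gateway expects.
ROUTES = {
    "search": "sonar-pro",      # live web search with citations
    "code": "gpt-4o",           # coding tasks
    "creative": "claude-3.5",   # creative writing
}
DEFAULT_MODEL = "gpt-4o"


def choose_model(task: str, needs_live_data: bool = False) -> str:
    """Pick a model: anything that needs fresh web data goes to the search model."""
    if needs_live_data:
        return ROUTES["search"]
    return ROUTES.get(task, DEFAULT_MODEL)
```

For example, `choose_model("creative")` returns the creative-writing model, while `choose_model("creative", needs_live_data=True)` is sent to the search model because freshness takes priority in this sketch.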

Key Benefits of Unified Access

- Single Billing: Instead of managing invoices from OpenAI, Anthropic, and Perplexity separately, you get one consolidated bill.

- High Availability: If one provider experiences downtime, you can instantly switch models in your code without changing your API key or infrastructure.

- Global Low Latency: Platforms like GPT Proto utilize distributed edge networks to route your request to the fastest available data center, ensuring your app feels snappy to users worldwide.
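The high-availability point can be illustrated with a simple failover loop. This is a sketch under assumptions, not GPT Proto's actual mechanism: `complete_with_failover` is a hypothetical helper, and `call` stands in for whatever function sends a prompt to a named model and raises an exception on failure.

```python
from typing import Callable, Dict, Sequence, Tuple


def complete_with_failover(
    models: Sequence[str],
    call: Callable[[str], str],
) -> Tuple[str, str]:
    """Try each model in order and return (model_used, answer) from the first success."""
    errors: Dict[str, Exception] = {}
    for model in models:
        try:
            return model, call(model)
        except Exception as exc:  # in real code, catch only your HTTP client's error types
            errors[model] = exc
    raise RuntimeError(f"All models failed: {errors}")
```

Note that a fallback model without web search will not return citations, so the caller should check which model actually answered before rendering footnotes.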

Use Cases for Perplexity AI API

Financial Market Analysis

Traders and financial analysts use the API to summarize earnings calls, track sentiment across thousands of news articles, and monitor real-time market movers. The ability to verify citations helps ensure that investment decisions are based on credible reports rather than hallucinations.

Academic and Legal Research

For paralegals and students, the API can quickly synthesize case law or academic papers available online. The citation feature allows for rapid cross-referencing, cutting down the initial research phase from hours to minutes.

Content Creation & Fact-Checking

Writers and editors use the API to verify facts in articles or to generate content briefs based on current trending topics. It serves as an automated research assistant that provides the "who, what, when, and where" instantly.

Frequently Asked Questions

Is the Perplexity AI API included in the Pro subscription?

Not entirely. While Pro subscribers get a small monthly credit, the API is billed separately based on usage. It is a pay-as-you-go service designed for developers, distinct from the fixed monthly fee of the consumer chat interface.

How does the citation system work?

When the model generates an answer, it assigns indices to specific claims. The API response includes a list of URLs corresponding to these indices. Developers can then parse this data to render clickable footnotes in their applications.
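Here is a minimal sketch of that parsing step. It assumes the answer text marks claims with bracketed, 1-based indices such as `[1]`, and that the citation list is an array of URL strings in the same order; both are assumptions to verify against real responses. The `render_footnotes` helper is a hypothetical name, and it emits markdown links.

```python
import re
from typing import List


def render_footnotes(answer: str, citations: List[str]) -> str:
    """Turn bracketed indices like [1] into markdown links to the matching citation URL."""

    def link(match: "re.Match[str]") -> str:
        index = int(match.group(1))
        # Indices are assumed to be 1-based; leave out-of-range markers untouched.
        if 1 <= index <= len(citations):
            return f"[[{index}]]({citations[index - 1]})"
        return match.group(0)

    return re.sub(r"\[(\d+)\]", link, answer)
```

Markers that have no matching URL are left as plain text, so a malformed response degrades gracefully instead of producing broken links.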

Can I use the API for free?

No, there is no permanent free tier for the API. You must purchase credits to use it. However, the cost of entry is low, allowing you to start testing with just a few dollars.

Is it better than using a search API and GPT-4 separately?

Using the Perplexity AI API is generally faster and easier to implement. Building your own system requires chaining a search API (like Bing or Google) with an LLM, managing the context window, and handling web scraping. Perplexity packages this entire workflow into a single call.

Conclusion

The Perplexity AI API represents a significant leap forward for developers who need their applications to be aware of the world in real time. Its ability to provide grounded, cited answers addresses one of the biggest complaints about generative AI: trust. With features like the Sonar Pro model and OpenAI compatibility, it is an attractive option for building modern, intelligent tools.

However, the complexity of its dual pricing model and the risks associated with single-vendor dependency cannot be ignored. For many development teams, leveraging a unified interface like GPT Proto offers the best of both worlds: access to Perplexity's powerful search capabilities when needed, combined with the flexibility, cost savings, and redundancy of a multi-model ecosystem. As we navigate 2025, the most successful AI applications will be those that can dynamically choose the right model for the right task, balancing accuracy, speed, and budget effectively.
